Assigned Entries

571

AI encyclopedia entries tagged with this learning stage.

AI Learning Stage

Study safety, policy, ecosystem dynamics, and emerging research directions.

Recommended Starts

Curated starting entries defined in the learning path metadata.

Evaluate real-world deployment risk, strategy, and long-horizon impact.

The current dataset assigns 571 entries to Stage 3: Frontier and Governance. The recommended entries below provide a narrower starting point if you want a manageable subset.

AI Safety is the field concerned with making AI systems operate ethically, reliably, and without unintended consequences, by aligning their goals with human values and societal norms.

Stuart Russell is a prominent computer scientist and AI researcher, co-author of the foundational textbook Artificial Intelligence: A Modern Approach. He is a leading advocate for AI safety, focusing on aligning AI systems with human values so that they remain beneficial.

Nick Bostrom is a philosopher known for his work on existential risk and superintelligence, and is included in this encyclopedia to connect ideas to the people who advanced them.

Superintelligence refers to an artificial intelligence system that surpasses human intelligence across all domains, a concept central to discussions in AI safety and existential risk.

Existential risk from AI refers to the potential for advanced AI systems to pose catastrophic risks to humanity, often linked to the alignment problem where AI goals may not match human values.

The Alignment Problem refers to the challenge of ensuring AI systems' objectives and behaviors align with human values and ethical standards to prevent existential risks.

Value alignment in AI is the effort to make systems' objectives match human values. It addresses the alignment problem through methods like inverse reinforcement learning, with the aim of preventing existential risks.

Inverse Reinforcement Learning for Alignment is a method where AI learns human values and goals by observing human actions, aiming to align AI behavior with human intentions.

Cooperative Inverse Reinforcement Learning (CIRL) is a framework where an AI infers a human's intended reward function through observation and interaction, even when the human's demonstrations are suboptimal. This collaborative process aims to align the AI's behavior with the human's goals.

Corrigibility is an AI system's capacity to allow its goals or behavior to be safely modified or corrected by human operators, even if it has instrumental reasons to resist such changes. It ensures human control.

Interruptibility is an AI's design feature allowing external human operators to reliably stop or modify its current task or goal pursuit at any point. This ensures human control and prevents unintended or harmful autonomous actions.

Instrumental convergence is the tendency for advanced AI systems, regardless of their ultimate objective, to develop similar sub-goals like self-preservation, resource acquisition, and self-improvement, as these are instrumentally useful.

AI Hub

This hub connects the main AI learning surfaces on Lexicon Labs into one path: the encyclopedia preview, student-friendly books, themed bundles, and the tools that help readers turn concepts into working understanding.

Open Guide

Paperback Hub

This page groups together Lexicon Labs paperback titles that help younger readers understand artificial intelligence, computation, and the people behind modern computing.

Open Guide

Score options by weighted criteria to make strategy and career decisions with clarity.

Open Tool

Create citations for papers fast with APA/MLA formatting and copy-ready output.

Open Tool

Analyze clarity in essays, emails, and articles with readability scores and instant issue flags.

Open Tool

A student-friendly intro to AI concepts, real-world use cases, and practical skills for the next generation.

View Paperback

A biography of Alan Turing, the trailblazing mathematician and codebreaker whose ideas shaped modern computing and artificial intelligence.

View Paperback

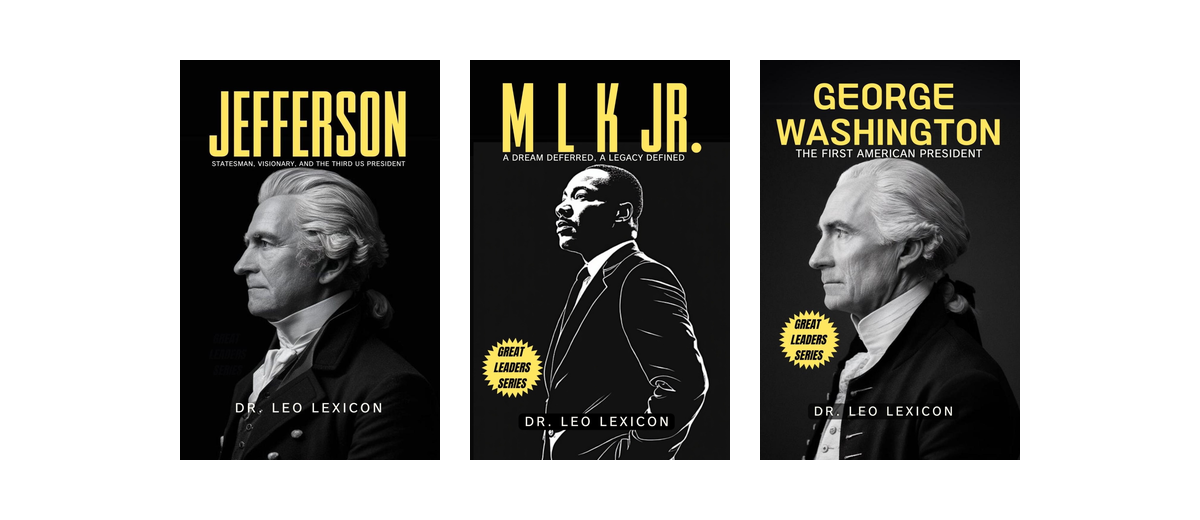

A concise look at George Washington's leadership, decision-making, and role in shaping early American history.

View Paperback

Books that explain artificial intelligence clearly for young and curious readers.

View Bundle

A curated collection of books in the Leadership Bundle series, designed for curious, independent readers.

View Bundle