Entries

330

Lexicon entries typed as hardware.

AI Entry Type

This page groups the hardware entries from the Lexicon Labs AI encyclopedia into one indexable landing page.

Top Categories

Topic areas where this entry type appears most often.

The lexicon currently contains 330 entries typed as hardware. This page serves as a quick orientation layer for readers who want to browse one kind of AI object rather than one subject area.

The category breakdown below shows where this entry type appears most often across the broader AI taxonomy.

150 hardware entries in this category.

140 hardware entries in this category.

26 hardware entries in this category.

5 hardware entries in this category.

4 hardware entries in this category.

Von Neumann Architecture is a computer design in which program instructions and data are stored together in a single, shared memory unit. This unified storage lets the CPU fetch both from the same memory, forming the basis of…
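The shared-memory idea can be illustrated with a toy stored-program machine. This is a minimal sketch, not a model of any real CPU: the four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the single accumulator, and the memory layout are all assumptions made for illustration.

```python
def run(memory):
    """Execute a toy stored-program machine whose code and data share `memory`."""
    acc = 0  # accumulator register (hypothetical single-register design)
    pc = 0   # program counter: index of the next instruction in memory
    while True:
        op, arg = memory[pc]   # instruction fetch from the shared memory
        pc += 1
        if op == "LOAD":
            acc = memory[arg]  # data read from the same memory
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc  # data write to the same memory
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {op}")


# Cells 0-3 hold instructions; cells 4-6 hold data.
program = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT", 0),
    2,
    3,
    0,
]
result = run(program)
print(result[6])
```

Because instructions and operands live in one list, every fetch and every data access goes through the same structure, which is the property the entry describes.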

Deep Architectures are multi-layered computational models, typically deep neural networks, designed with many processing layers to automatically learn intricate patterns and representations from vast amounts of data.

Network architectures define the specific layer arrangements, connections, and operations within an artificial neural network. They dictate how data flows and transforms to learn patterns, forming the blueprint for AI models.

Neural Architecture Search (NAS) is an automated technique for designing neural network architectures. It uses search algorithms to explore and evaluate candidate network configurations, aiming to find efficient, high-performing models for specific tasks.
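The explore-and-evaluate loop can be sketched in a few lines. This is a deliberately simplified illustration that enumerates a tiny search space exhaustively; practical NAS systems use strategies such as reinforcement learning, evolution, or gradient-based search. The search space and the `toy_score` function are hypothetical stand-ins for a real space and a real train-and-validate step.

```python
import itertools

# Hypothetical search space; real spaces are far larger.
SEARCH_SPACE = {
    "num_layers": [2, 4, 8],
    "width": [64, 128, 256],
    "activation": ["relu", "gelu"],
}


def toy_score(config):
    """Stand-in for training and validating a model; not a real metric."""
    capacity = config["num_layers"] * config["width"]
    bonus = 0.05 if config["activation"] == "gelu" else 0.0
    # Penalize distance from an arbitrary target capacity of 512.
    return 1.0 - abs(capacity - 512) / 2048 + bonus


def search(space, score_fn):
    """Evaluate every configuration and return the best one with its score."""
    keys = list(space)
    best_config, best_score = None, float("-inf")
    for values in itertools.product(*(space[k] for k in keys)):
        config = dict(zip(keys, values))
        score = score_fn(config)
        if score > best_score:  # ties keep the earliest configuration
            best_config, best_score = config, score
    return best_config, best_score


best_config, best_score = search(SEARCH_SPACE, toy_score)
print(best_config, best_score)
```

Swapping `toy_score` for a function that actually trains and validates a model turns the same loop into a very basic, and very expensive, architecture search.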

Efficient Neural Architecture Search (ENAS) is a neural architecture search method that lowers the cost of discovering deep learning model structures. A controller samples child models that share parameters, so candidates do not have to be trained from scratch, which significantly reduces computational cost compared to earlier NAS methods.

Transformer Architecture is a neural network design, introduced in 2017, that uses self-attention mechanisms and positional encoding to process sequences in parallel. It revolutionized natural language processing by efficiently handling long-range dependencies.
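The two mechanisms named above can be sketched in plain Python. This is a minimal illustration under simplifying assumptions: queries, keys, and values are the inputs themselves (no learned projection matrices), there is a single attention head, and the token vectors are made up for the example.

```python
import math


def positional_encoding(seq_len, d_model):
    """Sinusoidal position vectors: sine on even indices, cosine on odd."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** (2 * (i // 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (an assumption)."""
    d = len(x[0])
    out = []
    for q in x:  # each output row depends only on x, so rows could run in parallel
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return out


# Three made-up token embeddings of dimension 4.
tokens = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
pe = positional_encoding(len(tokens), 4)
inputs = [[t + p for t, p in zip(tok, row)] for tok, row in zip(tokens, pe)]
outputs = self_attention(inputs)
print(outputs)
```

Every position attends to every other position in one step, which is why distant tokens can influence each other without the sequential passes a recurrent network needs.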

GPU Computing harnesses Graphics Processing Units (GPUs) to perform numerous calculations in parallel. This method dramatically accelerates complex computational tasks, especially for AI model training, scientific simulations, and large-scale data processing.

The NVIDIA H100 is a powerful graphics processing unit (GPU) designed for accelerating artificial intelligence workloads, high-performance computing, and data center operations. It is built on the Hopper architecture, which delivers significant performance gains over previous generations.

The NVIDIA A100 is a powerful data center GPU, based on the Ampere architecture, designed for AI training, inference, and high-performance computing. It features Tensor Cores for accelerated matrix operations, crucial for deep learning workloads.

The NVIDIA GH200 Grace Hopper Superchip combines a Grace CPU and a Hopper H100 GPU with high-bandwidth memory. It's designed for demanding AI and high-performance computing, accelerating large model training and inference.

The Kunlun Chip is Baidu's proprietary AI accelerator hardware, engineered for high-performance deep learning computation in cloud and edge environments. It powers various AI services within Baidu's ecosystem.

Tesla Architecture was NVIDIA's first unified shader model GPU architecture, introduced in 2006. It significantly advanced parallel processing capabilities, laying groundwork for modern GPU computing beyond graphics.

AI Hub

This hub connects the main AI learning surfaces on Lexicon Labs into one path: the encyclopedia preview, student-friendly books, themed bundles, and the tools that help readers turn concepts into working understanding.

Open GuidePaperback Hub

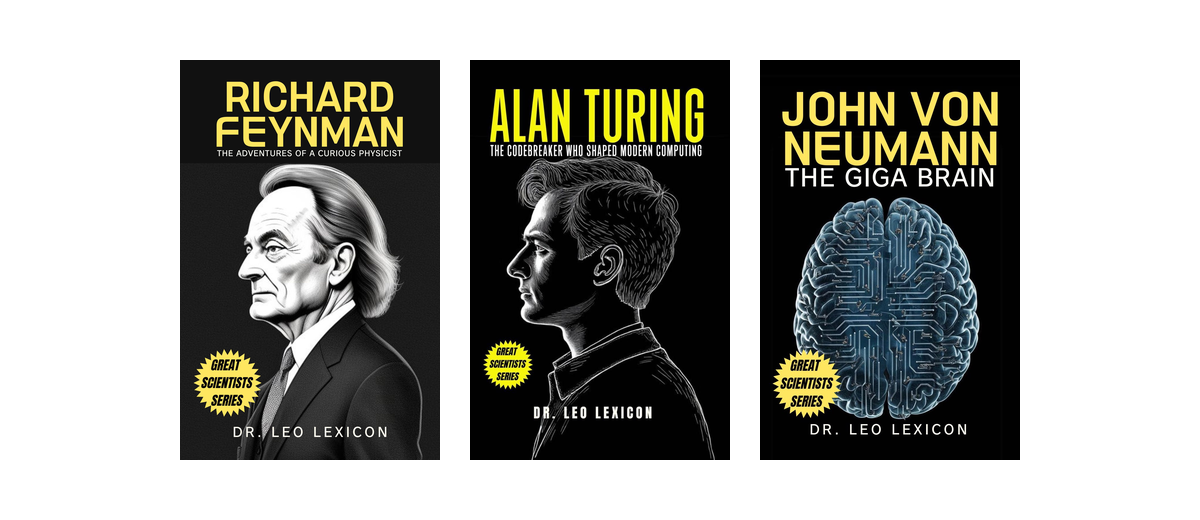

This page groups together Lexicon Labs paperback titles that help younger readers understand artificial intelligence, computation, and the people behind modern computing.

Open GuideTurn messy notes into study-ready flashcards and CSV exports for spaced repetition apps.

Open ToolTransform notes into visual diagrams and export them for sharing or studying.

Open ToolCreate citations for papers fast with APA/MLA formatting and copy-ready output.

Open ToolAnalyze clarity in essays, emails, and articles with readability scores and instant issue flags.

Open Tool

An accessible primer on quantum computing fundamentals, from qubits and superposition to real-world applications.

View Paperback

Learn core Python programming with approachable examples designed for teen learners and first-time coders.

View Paperback

Discover the ideas and influence of one of the most brilliant minds behind computing, game theory, and modern science.

View Paperback

A practical introduction to coding concepts for young learners and beginners.

View Bundle

Books that explain artificial intelligence clearly for young and curious readers.

View Bundle

Modern scientific minds who shaped computing and physics.

View Bundle