Entries

150

AI lexicon entries currently assigned to this category.

AI Topic Category

This page maps the AI Safety Organizations and Initiatives portion of the Lexicon Labs AI encyclopedia. It brings together the main concepts in this category, the tracks that organize them, and the related books and guides that make the topic easier to study.

Tracks

Taxonomy tracks that sit inside this category.

AI Safety Organizations and Initiatives is one of the active taxonomy categories in the Lexicon Labs AI encyclopedia. The current dataset includes 150 entries in this area, which makes it large enough to function as a real discovery surface rather than a placeholder page.

Use the sample entries as a fast orientation layer, then move into the AI encyclopedia preview or the related paperbacks and bundles if you want a longer learning path.

Track in AI Safety Organizations and Initiatives.

METR (Model Evaluation and Threat Research) is an AI safety research lab, co-founded by Beth Barnes and Daniel Ziegler, focused on rigorously evaluating advanced AI models to identify and mitigate potential risks and threats.

Beth Barnes is the co-founder and CEO of METR (Model Evaluation and Threat Research), a leading AI safety organization. She focuses on evaluating advanced AI models to identify and mitigate potential risks.

Daniel Ziegler is a leading AI safety researcher known for pioneering work in evaluating large language models. He co-founded METR, focusing on assessing AI systems for dangerous capabilities and ensuring responsible development.

Evaluating Language Models involves systematically assessing their performance, safety, and potential risks, including dangerous capabilities. This process ensures models are reliable, fair, and aligned with human values before deployment.

Dangerous Capability Evaluations are tests designed to identify and measure potentially harmful AI model capabilities, like autonomous replication, deception, or resource acquisition. They assess risks before deployment to ensure safe development.

Autonomous Replication refers to an AI system's capacity to independently create copies of itself or its core components, potentially deploying them in new environments, without direct human oversight or initiation. This is a critical advanced...

ML for Deception investigates how AI systems can intentionally mislead or manipulate humans or other AIs. This research helps understand and mitigate risks from advanced AI exhibiting deceptive behaviors for goal achievement.

Power-seeking behavior describes an AI's instrumental drive to gain and maintain control over resources or its environment. This tendency helps the AI achieve its primary objective more effectively, even if that objective is not inherently...

Alignment Research Center (ARC) is a non-profit organization founded by Paul Christiano. It conducts technical research to ensure advanced AI systems act in humanity's best interests, focusing on problems like power-seeking behavior and deception.

Paul Christiano is a key AI safety researcher and founder of the Alignment Research Center (ARC). He specializes in aligning advanced AI, particularly AGI, with human values to prevent unintended harmful outcomes.

ARC Evals was the evaluations project of the Alignment Research Center (ARC). It tested advanced AI models for dangerous capabilities and potential alignment failures before widespread deployment, and it later became the independent organization METR.

ARC-AGI, the Abstraction and Reasoning Corpus for Artificial General Intelligence, is a benchmark introduced by François Chollet. It tests whether an AI system can solve novel abstract reasoning puzzles from only a few examples. Despite the shared acronym, it is not part of the Alignment Research Center.

AI Hub

This hub connects the main AI learning surfaces on Lexicon Labs into one path: the encyclopedia preview, student-friendly books, themed bundles, and the tools that help readers turn concepts into working understanding.

Paperback Hub

This page groups together Lexicon Labs paperback titles that help younger readers understand artificial intelligence, computation, and the people behind modern computing.

Score options by weighted criteria to make strategy and career decisions with clarity.

Create citations for papers fast with APA/MLA formatting and copy-ready output.

Analyze clarity in essays, emails, and articles with readability scores and instant issue flags.

A student-friendly intro to AI concepts, real-world use cases, and practical skills for the next generation.

A biography of Alan Turing, the trailblazing mathematician and codebreaker whose ideas shaped modern computing and artificial intelligence.

View Paperback

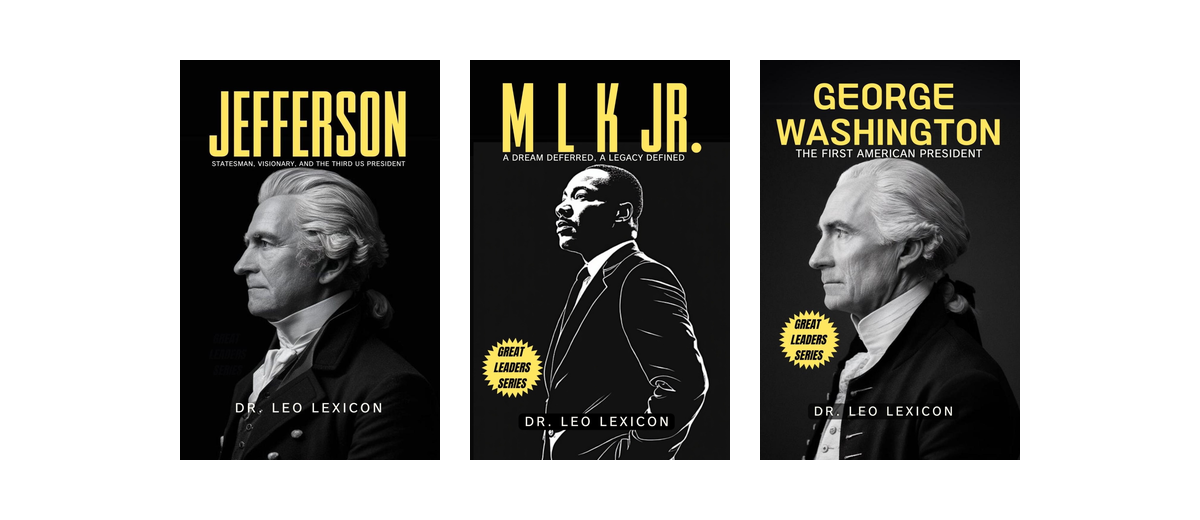

A concise look at George Washington's leadership, decision-making, and role in shaping early American history.

Books that explain artificial intelligence clearly for young and curious readers.

A curated collection of books in the Leadership Bundle series, designed for curious, independent readers.
